The video discusses how artificial intelligence (AI) is revolutionizing foundational scientific research by impacting the formulation of problems and the discovery process. AI has the potential to dramatically enhance scientific inquiry by leveraging vast data-processing capabilities, transforming the way researchers analyze and blend insights across diverse fields such as physics, biology, and chemistry. Eric Horvitz, Microsoft's Chief Scientific Officer, outlines the immense impact AI can have by speeding up the development of new materials and pharmaceuticals, and by guiding scientists in conducting more effective experiments.

AI's potential is also highlighted in its ability to synthesize information across various domains, transcending traditional disciplinary boundaries. Examples provided include AI's role in generating materials science innovations and discovering biological interactions and functions that were previously unknown. These advancements illustrate AI's capacity to act as a comprehensive tool for detailed analysis and fostering new breakthroughs by bridging specialties that were once disparate.

Key Vocabulary and Common Phrases:

1. foundational [faʊnˈdeɪʃənl] - (adjective) - Something that serves as an underlying basis or principle. - Synonyms: (basic, fundamental, core)

How might AI impact foundational scientific research?

2. empirical [ɛmˈpɪrɪkl] - (adjective) - Based on observation or experience rather than theory or pure logic. - Synonyms: (observational, practical, experiential)

...the painstaking accumulation of empirical data from which our capacity to spot patterns has resulted...

3. cross-fertilization [krɔs ˌfɜrtəlaɪˈzeɪʃən] - (noun) - The mixing of ideas, cultures, or concepts, which enriches understanding and innovation. - Synonyms: (interchange, interaction, collaboration)

...facilitate a really deep cross-fertilization that will surely yield startling connections.

4. amplification [ˌæmpləfɪˈkeɪʃən] - (noun) - The action of making something more marked or intense. - Synonyms: (intensification, expansion, enlargement)

In some ways, you might say that we see an amplification and an incredible acceleration...

5. winnowing [ˈwɪnoʊɪŋ] - (noun) - The process of separating desirable elements from a group. - Synonyms: (sifting, filtering, narrowing)

...this winnowing process from your initial possibility of 30 million down...

6. synthesis [ˈsɪnθəsɪs] - (noun) - The combination of ideas to form a theory or system. - Synonyms: (integration, union, amalgamation)

And what might happen if these systems become the glue to bring specialties together? And what about sort of going the other direction? So synthesis is sort of putting things together...

7. proteome [ˈproʊtiˌoʊm] - (noun) - The entire complement of proteins that is or can be expressed by a cell, tissue, or organism. - Synonyms: (protein expression, protein profile, protein architecture)

...it's so exciting to be in a situation where a group can say, here are all known proteins, and here are their estimated structures in the human proteome...

8. quasicrystals [ˈkwɑzɪˌkrɪstəlz] - (noun) - Solids whose atoms are ordered but not periodic; first described mathematically and in the laboratory, they occur only rarely in nature. - Synonyms: (aperiodic crystals, non-repeating structures, complex crystal lattices)

An interesting example came up with this area called quasicrystals...

9. repurposing [riˈpɜrpəsɪŋ] - (noun) - Using something for a different purpose than its original intent. - Synonyms: (reassigning, reallocating, converting)

...for studies of repurposing. Can I use these existing drugs that have already been OK'd for clinical use on a disease they weren't targeted for?

10. hallucination [həˌluːsəˈneɪʃən] - (noun) - Perception in the absence of external stimulus that has qualities of real perception; in AI, the generation of plausible-sounding but false or unsupported output. - Synonyms: (illusion, delusion, mirage)

I mean, obviously, the whole issue of hallucination is probably not a good thing for this, one would imagine.

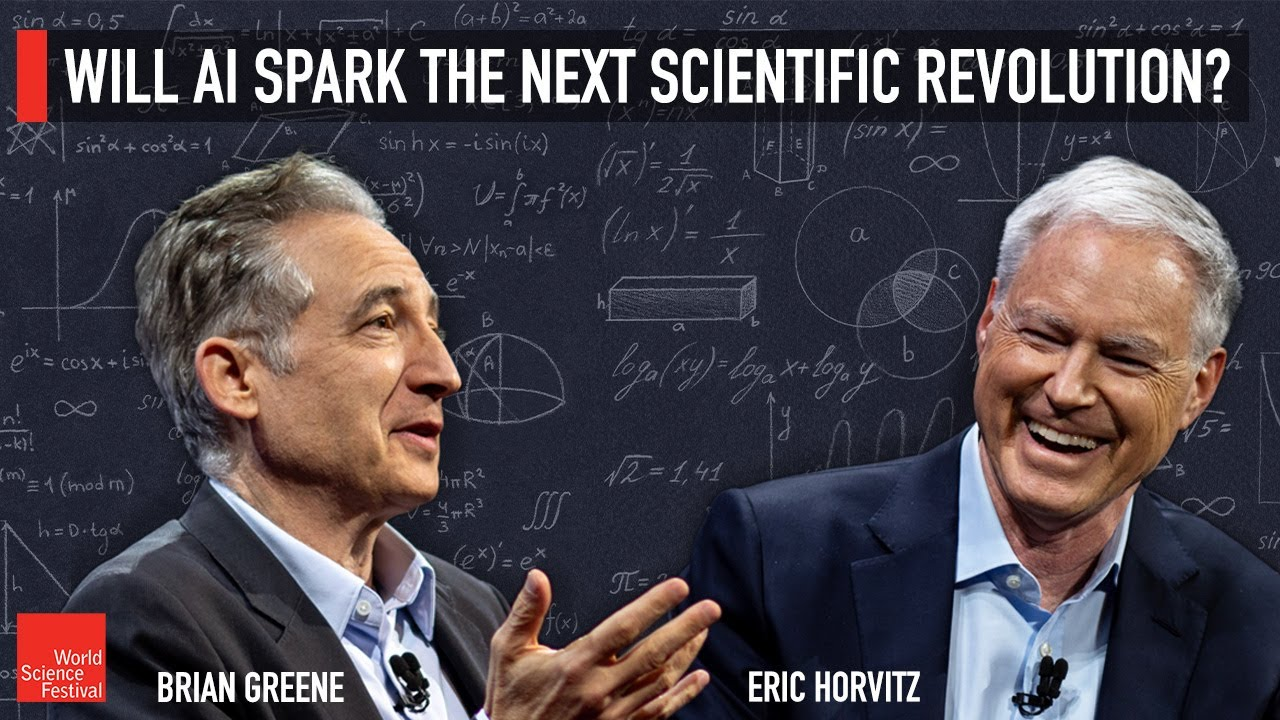

Will AI Spark the Next Scientific Revolution?

So thank you for joining us for this conversation about fundamental science in the age of artificial intelligence. You know, since November of 2022, we have, of course, all become increasingly aware of the power of artificial intelligence to impact the traditional ways we approach various tasks, from the mundane to the complex. But going well beyond these everyday impacts, how might AI impact foundational scientific research? How might it reshape the way we approach and solve complex problems? And at an even more basic level, how might it change the kinds of problems we can even formulate? Now, traditionally, of course, scientific inquiry has relied almost exclusively on human intuition, human physical insight, and, of critical importance, the painstaking accumulation of empirical data from which our capacity to spot patterns has resulted in the formulation of the basic laws that guide the unfolding of all physical systems.

Now, AI may well offer a shift to this paradigm by providing unparalleled data-processing capabilities and an astounding, a truly astounding, capacity to identify patterns that are simply beyond the ability of most, if not all, mortal minds to reveal. Machine learning algorithms, rapidly analyzing vast data sets, can enable researchers to explore new dimensions, consider new hypotheses, and blend insights from diverse fields. All of this will undoubtedly yield insights that would otherwise remain hidden. The transformative potential extends across the traditional disciplines, from physics and biology to chemistry and beyond, where, more than just enhancing what we scientists have long done, AI can facilitate a really deep cross-fertilization that will surely yield startling connections. Frankly, it may even redefine the nature of scientific inquiry itself. To explore these exciting developments, our conversation today is with an individual who has been a leading voice in pursuing these areas. And so I am so honored to invite to the stage Eric Horvitz, Microsoft's chief scientific officer.

He also serves on the President's Council of Advisors on Science and Technology (PCAST), where he recently co-authored a report to President Biden called "Supercharging Research: Harnessing Artificial Intelligence to Meet Global Challenges."

Great to be here.

Great to see you, Eric. So in a few minutes, I want to get into some concrete case studies, so this doesn't just stay at an abstract level. But as a context-setting part of our discussion, what are the general ways in which you anticipate, or already see, AI really having an impact on the way we do fundamental science?

In some ways, you might say that we see an amplification and an incredible acceleration, a speed-up of some of the things we've done in the past. For example, it might take several years of analysis and compute to lay out possibilities for new materials or new pharmaceuticals, get down to one that is actually promising, and then further converge on something that really makes a difference. We're going from years to months, even to weeks, because the system can now generate and scope down possibilities, and then help us, and not just in the final end game of getting to the working or promising compounds.

The systems can even guide us now as to what the best experiments might be. The wet-lab experiments are the slow, tedious part of projects and take a lot of resources, so having systems that can compute the value of doing different experiments and guide us can save both time and money.

And what about synthesizing areas of research where you may have an expert over here and an expert over there? Some people can cross a wide range of disciplines, but not many, whereas these systems really don't know any boundaries in the kind of data they can be trained on. So are we seeing things come together in ways we would never have anticipated?

You know, my team got access to GPT-4 back in August of 2022. You mentioned November: the general public got access a bit later, and that was GPT-3.5; you saw GPT-4 in February. The one word I had for our reaction in playing with the system was "polymathic." You would need a room of experts to do what the system was doing across fields.

I think we're just starting to see glimmers of what that might mean for the sciences. Over the decades, we've done such great work by specializing, where the best people are the specialists. And what might happen if these systems become the glue to bring specialties together?

And what about sort of going the other direction? So synthesis is sort of putting things together. What we try to do as scientists is see a pattern and then make the leap to say, okay, what does that tell us about the larger system as a whole? Can these artificial systems do a good job at that? Can they take us to generalizations that perhaps we would never consider?

Think about the magic, almost the exhilaration, that Isaac Newton must have felt in realizing that a special observation of something falling near him (I'm not sure the apple story is exactly true) explained the heavens: an incredible generalization, with incredible power. I do think these systems will help us do that kind of thing.

And again, we're starting to see glimmers of this kind of generalization ability. To date, most of what's going on in the sciences is pattern recognition: sorting, sifting, diagnosing. But we are seeing synthesis happening now, and generalizations occurring. The systems seem to be getting better and better at certain things. They seem to have induced interesting facts and patterns about the world. For example, and this is a little bit outside of physics and chemistry, it's pretty clear to me that these systems have induced what I would call a theory of mind: they understand different points of view, facilitate conversations, and understand, for example, what a patient's questions might be after a diagnosis, from the patient's point of view. It's unclear how they've learned that from incredible amounts of material, whether literature and fiction or nonfiction reports of various kinds, to figure out what people care about.

How surprising is that? It's a question that occurs to me whenever these kinds of surprising behaviors emerge. If a system trains, as you say, on basically everything we've written, at least a significant part of that literature is concerned with being a human being, interacting with other human beings, or anticipating what other human beings would say, do, or feel, or how they would react. So should we be surprised, and look at this system and say, how did it do that? Or should we simply say, well, we did it, and it fed off of what we did, and therefore, yes, it makes great sense that it is doing that?

Over the years, there's been quite a big chasm between what we do and what the systems we've built in the artificial intelligence field can do. I, like many of my colleagues, am stunned and amazed at the properties and powers we're seeing in the capabilities that have come to the fore over just the last year and a half with what are called generative AI models.

And what's interesting, in some ways, is that it's framing new questions and mysteries that might bear on human minds. We're not sure yet, but it's certainly raising lots of questions. It's the first time in a long time that I've been somewhat mystified by the systems we're building ourselves. Up until a couple of years ago, I had a really good sense of how everything worked, and I reveled in that. But now it feels like the earliest days of studying neurobiology and neuroscience, being startled that parts turning upon parts, some countable number of neurons, could possibly do these things. And let me just say, it's a conjecture, but there may be a relationship between what we're seeing now and how our own minds work, and therefore it might shine a spotlight onto the future of where that's going.

I don't want to dwell on the following, but in past conversations on this very stage, some researchers have been willing to ascribe a small amount (I don't know what that means) of consciousness to these systems. I can't imagine that. But for someone who is actually in the thick of it, does that ever crop up in your thinking?

Well, these discussions come up now and again. Look, at some point we have to stop viewing vertebrate nervous systems as something from a different universe.

I agree with that.

Or from the theological world. These are fabulously beautiful machines; we are such machines, and as far as we know, those machines are doing computation of some kind, whether or not it's beyond our ability to understand right now. That means various capabilities we see in ourselves might be explored someday in machines. Someday. It makes sense for people who are excited about that pathway to ask what that will or would mean. We're not there yet, but talk late at night, over a couple of glasses of wine, with many of us in artificial intelligence research, as well as in philosophy and neurobiology, and you'll find people mystified by consciousness. That's what brought many of them into the field: well, what on earth is going on at the mechanism level?

Sure. Yeah, absolutely. It is a deep question, and I do think it may well be the case that one day we'll look at one of these systems and say, yeah, I think that is consciousness. And by the way, there's one point of view on that topic that there's no such thing as consciousness; it's just what it happens to be like to have these computing capabilities. And if that's the case, you can imagine it emerging someday with impressive growth in the scale of computing capabilities. That's just the way things are, right?

Quite possible.

So I want to get to some of those concrete examples I referred to a moment ago, where we've already begun to see these AI systems really transforming the way we go about doing various sciences.

So if we start with an example from materials science, I know that you have been involved in using these systems for the really important task of redesigning batteries and things of that sort. Can you tell us how you go about doing that, and where you have found AI changing the way we do that research?

So, teams at Microsoft work with Pacific Northwest National Laboratory on the pursuit of less expensive batteries that might still be powerful. One of the big challenges in sustainability is storing power from sunlight and wind for when it's not available, so batteries are very important to figure out and build. What these two teams did together was use AI methods that are much faster than what you might call traditional quantum mechanical, or quantum chemistry, computations: neural networks that approximate these interactions, turning computations that would take many years into weeks and days.

It's interesting to think about. It gets back to the chemistry many of us learned in high school and early college, where we had these electron shells: s, p, and d. Well, it turns out that for s and p, we can do pretty well, if those are the shells we have to worry about. So it focuses us on certain compounds, but lets us go very fast. We started out with a system that could take electrolytes of the form used now in lithium batteries and generate a family of about 30 million possibilities. That's too many to evaluate, and we don't know which ones would work. Then we used another pipeline, another set of AI technologies, to bring it down further, looking at things like thermal stability and manufacturability, finally down to just a few hundred. At that point you can say, let's now pull out the heavy-duty power of the classical computing methods.

Let's do density functional theory (DFT) computations on these. Let's really figure things out, down to just a handful that could be tested in the lab.

And these are literal atomic structures that the system is spitting out, saying here's a candidate, here's a candidate, here's a candidate. But if you were to actually test them all... what did you say the original number was?

30 million.

30 million. That would potentially be a burden to do.

Well, this is what I meant earlier when I said that one of the big outcomes is going to be mastering these funnels, or pipelines: which kind of AI technology to use, how to hybridize it with classical methods that might be expensive, and when to use them, so that we arrive at a materials science discovery pipeline.

Now, think about materials, right? They are really central in the history of humanity. We had the Stone Age and the Bronze Age; these were materials. And here we are, becoming masters of new kinds of materials that might someday change what a skyline looks like.

And so when you follow that winnowing process from your initial possibility of 30 million down, did it then settle on a few, or on one, as the candidate?

Even including the classical computational methods at the end, it came down to just a handful, and then to one.

To one?

To one candidate, which is basically a compound that uses 70% less lithium. It replaces much of the lithium with sodium in a particular structure; it's much less expensive, and it looks quite powerful.

But is this theoretical, or is this something...?

Oh, no, no. This was built and is running machines now as we speak.

Really? So this is real stuff.

This is real stuff. It's very exciting. It's actually really happening now.

And so, start to finish, what sort of overall timescale did that process to generate this new material take?

Several months. Several months, and it would have been years without this technology. And these are the early days. We're just starting to master these funnels now. Imagine what they'll be like for generating new materials ten years from now.

Amazing. Another example that I think some of us have heard something about is in the biosciences. AlphaFold is one example, and we're actually going to be talking about that at some point in this series of conversations as well. Tell us something about that. But then there's another example in the bioscience realm that you've been involved with as well, having to do with halicin. So if we can just get a feel for any of that.

So I think we've heard about AlphaFold: AlphaFold, AlphaFold 2, AlphaFold 3. There's RoseTTAFold, another set of technologies from a different team doing similar and related things. It's so exciting to be in a situation where a group can say, here are all known proteins, and here are their estimated structures in the human proteome or the eukaryotic proteome.

The pace has been quick. I mean, structure is one piece of the interesting challenge in the biosciences; of course, the other piece is function. What do these things do? What are their molecular dynamics? How do they interact? Some of the work at the University of Washington looked at computing the interactions among a whole lot of proteins in a eukaryotic cell. It happened to be yeast, but that's what we're built on; it's the same platform. And it's interesting that these previously unknown interactions have been brought to light by a computational microscope driven by AI technologies. What I found most interesting about this project on interaction and function was that proteins were found to be interacting, and when biologists looked at them, they said, we have no idea what those are doing, raising whole new questions and directions about how biology works.

But in both of these examples, the battery example and this biological example, what training data are you using? Do you need a very carefully curated set of data in, say, the battery case, so that it has all the knowledge of X and isn't affected by knowledge of Y? I mean, obviously, the whole issue of hallucination is probably not a good thing for this, one would imagine.

This is a really nice question. What was done in those two projects, materials science and, let's say, a biology case? And I'll mention this halicin example you just mentioned.

Yeah, sure.

Training was done a little bit differently for the materials science case. What's really impressive about some aspects of chemistry- and physics-based AI work these days is that you can generate training data by paying for it.

You can compute solutions to the Schrödinger equation, or approximations: you have structures, and you compute ground truth, or estimates, by running the physics, so you can get training data.

You crank the computational machinery of classical physics, but who's cranking that? Do you have, like, a team of quantum mechanical graduate students who...? I mean, how do you do that?

In part. But the chemistry community has generated tools based on lots of folks adding to a database of computed known chemistries and the computed, we'll just say, wave functions. And that can be used to start reasoning about and estimating other things you haven't seen before, which is very interesting. Now, the halicin situation is one where there was a large library of existing drugs, a library that's been maintained for studies of repurposing.

Can I use these existing drugs that have already been OK'd for clinical use on a disease they weren't targeted for? Because we can learn more about what that pharmaceutical agent does. And in a project that used a similar kind of funnel, looking for antimicrobial structures and similarities, a chemical, or medicinal compound, that had been studied for diabetes was discovered to have impressive antibiotic powers. It was named halicin, by the way, to honor HAL from 2001: A Space Odyssey, believe it or not.

"I can't do that, Dave."

Now you can say, I can do that. I can help fight that infection. More recently... Let me just mention, while we're talking about discovering antibiotics, that we're facing a crisis with antibiotic resistance in medicine right now, and the rates are rising.

And so a team at MIT under James Collins has done some recent work extending the halicin work to new understandings, using what they refer to as explainable AI: they build the same kind of funnel, and then they try to visualize and understand what's going on. So think about the process they went through. They had ground truth data about known antibiotics and their structures that function well. They had ground truth on compounds that don't work very well. They also had information on compounds that are cytotoxic to human cells versus those that are nontoxic. And you can search these spaces: find me a new antibiotic, a novel structure, in a region of structure space that's not toxic to humans. In this case, they discovered a new antibiotic and could also visualize a new mechanism that had never been understood before, which can now become the basis of new principles and understandings.

In this case, it was discovering a way that a chemical compound works. It literally shorts out the electrochemical gradient, the proton motive force, across membranes, so it sort of stops bacteria dead; it hits their reactor, their energy source.

So I think one of the wonders, and again, I'm on the outside of the field, so if I'm more impressed by something than perhaps I should be, feel free to correct me... When I see the output of large language models, what I find incredibly impressive is that there isn't an underlying, built-in understanding of language or grammar, at least at my level of understanding. It's really based on finding the patterns in huge data sets and going forward from there.

In the case of drug development or materials development, do you have a model of the external world? I mean, does it know how physics works? Or is it, again, simply that enough data gives it the capacity to work out statistics and say with reasonable likelihood that this particular molecular compound will do what you're trying to solve for?

This question has been discussed quite a bit in the field. I believe, and I think many colleagues of mine share this belief, that with these large systems, you increase their scale, give them even a simple predictive task, like predicting the next token (the next pixel for image generation, the next word in language, the next chemical structure, the next amino acid in protein work), and push them harder and harder to predict better and better.

To do that as well as they can, these systems are inducing models of the world. That might answer your question: no, they're actually gaining a deeper understanding of the kinds of foundations that humans might be relying on. They're building their own world models, given enough data, as you push them and optimize them to make better predictions on a basic training task. The way I view it, you're inducing internally the ideal compressions, and the ideal way to compress well is through foundational understandings and principles.

Now, this, to me, sounds like new science, a new paradigm, because what we're used to is that we look at a few examples, and then we theorists run to the blackboard and try to write down differential equations that might model the thing we're looking at, and then we use those differential equations to make further predictions.

But you're saying, put that to the side. Just get enough data, let yourself see the patterns in the data without trying to encapsulate them in some mathematical formula, and use those patterns to make the predictions. That seems fundamentally different from what we're used to doing. Do you see it that way?

Well, but with AI it's not just predictions now, right? It's new kinds of syntheses, new kinds of imagination. That's absolutely happening. But we're at a point now where it's not even clear yet, for example, how much better the systems will do when you actually give them what AI people call prior knowledge. Like, give them the differential equations.

That's what I'm wondering.

Give them a helping hand: hey, we're humanity, we know a bunch, let's make that a starting point, versus having to induce all of that. So I do think it's not clear what the best mix is going forward between human knowledge, which these things are learning quite a bit from, and induction from large data sets.

There's another example that I know you've been involved with that's closer to my area of study, physics. Can you tell us about this one? It's particularly fun, actually.

Well, there are a couple of examples. It's interesting.

On the President's Council of Advisors on Science and Technology, the PCAST committee, I met Saul Perlmutter, who's a Nobel laureate. You probably know him quite well.

I do know him well.

He studies cosmology. He, Bud, and other physicists: we started a little working group looking at whether these models can help us think through interesting challenge problems. And we've been working away. There have been some failures. I mean, after we showed big-name physicists what GPT-4, Claude, and other models said when thinking out of the box about whether the universe underwent inflation or is a bouncing, cyclical universe, the reaction was, well, kind of like an ambitious graduate student.

And I was thinking, that sounds like Paul Steinhardt.

I'm not going to say anything. I'm not going to say anything. But let me just say that if you'd told me five years ago that a leading physicist would say that's kind of like an ambitious freshman, I'd have said, not any AI system that I know about. But an interesting example came up with this area called quasicrystals, and we were looking at this a bit. A quasicrystal is an interesting aperiodic crystal structure that was proposed a few decades back and confirmed to exist. It was first proposed mathematically, anyway. Natural quasicrystals are very hard to find on Earth; they've been found in a meteorite and in some other rare places. And so a question was posed by a physicist.

Well, can you help me find an aluminum-based quasicrystal on Earth? Where would I look? It was kind of interesting to have just a general large language model say, have you considered lightning strikes on bauxite mines? By the way, you might want to go to Venezuela. They have a lot of lightning, and they have a lot of bauxite mines that are on the surface. And so even the idea of helping the scientist say, wow, that's an interesting idea, and, you know, where are my travel tickets?

And so did that pan out?

Well, this is brand new, so we'll have to see.

So are you one of the people going to go and check on this?

Well, I'm heading to Europe now, but maybe I'll get a trip to Venezuela and help out with that mission.

Yeah, that would be kind of spectacular. Now, these are potential, and already realized, success stories. What's the concern? I mean, do you have any worries about where this may lead us, into places that we'd consider unfortunate?

Well, like fire, like any technology that humans have created, almost everything we create is dual-use. Now, I think humans, Homo sapiens, have done a great job. Look at the world we're in right now, the civilization.

How incredibly well we've pressed our advances into valuable technologies that we use in daily life, technologies that have changed the world, that have given us cities and electricity and the Internet, and that have changed the whole way we do healthcare. Even our own language is a tool that we invented and now use to coordinate. Yes, the powers of AI can be harnessed in malevolent ways by malicious actors. For example, in biology, you can imagine a biologist using protein design tools to design new toxins of various kinds. I believe we need to be vigilant to stay on top of this. As people may have seen, the President's executive order on AI has an explicit call-out on a number of dual-use situations and how we might address them going forward, including biosecurity.

What kinds of requirements should we have on devices that generate DNA for scientists to make proteins? How do we screen them to make sure they're not being used by somebody with ill intentions? I think we'll be able to stay on top of it. I'm quite hopeful and optimistic. And, of course, we all see the power of these imaginative systems and how they're being used to generate disinformation, deepfakes, and so on. Again, there are technologies being developed to counter that and to keep us on track.

And if you were to look forward, what would you consider the big prize? Is there one particular place where, if AI can take us there, you'll say, that was the place I had hoped we'd get?

I'd like to see AI harnessed to come up with solutions and mitigations for the numerous diseases we face, to increase our longevity, to be pressed into new approaches in education, to bring education to the masses, and to extend our understanding of the universe. I think we're seeing the sparks now, the glimmers of the possibilities. Look, for me personally, the ultimate goal I've been pursuing in my scholarship is understanding the human mind, understanding ourselves. Although I've pursued this for decades, this is the first time I'm seeing what I would call entrées into questions we might ask about large-scale systems, their behaviors, and their ability to synthesize, generalize, abstract, and compose in new ways that seem to be on the path to humanlike behaviors.

I'm hoping that the tools we build and the new approaches to understanding these systems will actually lead, in some ways, to a merger of what we call neuroscience and AI, and bring us to new understandings of the mind. I see that as an amazing opportunity and an amazing goal. There's one final topic, related to exactly what you just said, that I want to talk about. On this stage, we've had conversations with people from a variety of disciplines, and at times part of the conversation has asked the question: can AI systems, as currently built, be creative? One point of view is, absolutely not, of course not. All they do is mash up things from the past, so all you're doing is rearranging the pieces.

And how could that possibly be creative? Others have a radically different view of what this kind of blending and synthesizing can yield. Where do you come down, especially when you think about creativity in science? I believe these systems are impressively creative, and they will become even more so, even more imaginative, over time. And I'll also ask the question: if you say the system is just combining ideas of various kinds, isn't that what we do? Even our most creative folks, when they're thinking outside the box, are combining thinking patterns and methods of various kinds.

In the same way, I would say to everybody, even the people who are naysayers right now about creativity in these machines: hold onto your seats, because we are on a curve. It's likely an exponential right now in terms of the capabilities we're seeing. Check back with me in 18 months. Just 18 months? Yes. Is there something specific you wanted to tell us about? I just think that's the pace we're on right now. I think we all have a sense that we're coming up onto a new plateau. Like, wow, isn't this cool? What do we do with this? Materials science? Bioscience? This is fabulous, but we're actually riding a different kind of wave right now.

And my hope is that we act quickly enough to harness these methods in new, magical ways for humanity, and that we watch out for the rough edges so we can get the most out of these systems. And then there's a deeper question about how these powers of intellect we're seeing in machines will interleave with society more deeply and broadly over time. Do you worry at all about the role we'll have in pressing the frontiers of science? If there are these creative AI systems that can harness so much more information and see patterns we can't even imagine, are they going to make us obsolete in this realm? Well, I like to think about this in terms of: these are tools that we've created.

They'll probably have as powerful an effect on humanity as our invention of language has had, in terms of how our culture evolves moving forward. These days, I don't ask myself the question, will we become obsolete? I say something more like an assertion: it's time to assert the primacy of human agency and to think about how these systems will serve us. That's the perspective I think we need to take as these machines gain in power. They're serving humanity. They're serving us. They'll be incredible tools. We are in a transformational time, and this is how it feels to be in a transformational time.

I often say that, looking back upon this period from a thousand years from now, the next 50 years will have a name. I'm not sure what the name will be, but it'll be noted in history. Right. This will really matter. Yes. So it's either this way, that way, or something we haven't thought of. But it's going to be transformational. Yeah. Fantastic. Thank you so much. Really appreciate that.

Artificial Intelligence, Science, Technology, Innovation, Scientific Inquiry, Cross Disciplinary Research, World Science Festival